The scarlet tanager alights on a branch, whistles twice, and flies off.

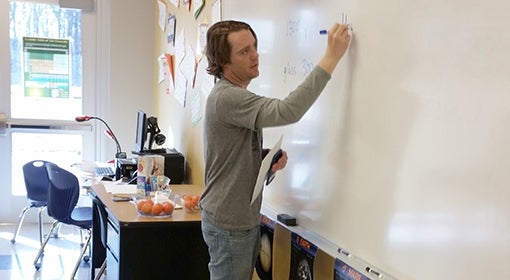

The little red and black bird has no inkling that a tiny AudioMoth recorder just captured its song. Smaller than a deck of cards, the recorder is one of 200 such devices wrapped in Ziploc bags and strapped to trees in Sproul State Forest in central Pennsylvania. The recorders will run continuously for three weeks, until Justin Kitzes, assistant professor of spatial macroecology at Pitt, comes to retrieve them.

Kitzes and his team are working on something far bigger than the little scarlet tanager. With the aid of a Microsoft and National Geographic AI for Earth Innovation Grant worth more than $91,000, they are developing a powerful tool to help ecologists explore all sorts of questions, from when species migrate to how best to fight off extinction.

By the end of the one-year grant, and with help from the AudioMoth, they will have built an automated classifier, freely available online, that can identify the calls of approximately 600 birds. An ecologist or amateur bird enthusiast will be able to upload a sound file and get back a list of the birds present in the recording.

“If you can produce a tool that makes it easy to do something, it affects the field substantially,” says Kitzes. “There are a lot of places right in our backyard here that nobody’s looking at and we fundamentally don’t know what’s happening to biodiversity out there.”

Working with Pitt’s Center for Research Computing, the researchers in Kitzes’s lab convert the recordings into spectrograms that turn each bird sound into a visual pattern. The tanager’s song is a series of long squiggles, whereas the crow’s is a column of short dashes. Once Kitzes’s team programs the machine-learning model, teaching it which image corresponds to which bird, the program can pick out a specific bird’s call using a convolutional neural network—the same technology Google uses to scan photographs for individual features like a dog or flower.
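
For readers curious about the spectrogram step, the idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the lab's actual pipeline: the function names `spectrogram` and `tone`, the frame size, and the synthetic two-note "song" are all hypothetical, and a real project would use an optimized signal-processing library rather than this slow pure-Python transform. The sketch slices a sound into short overlapping frames and measures how much energy each frame holds at each frequency, which is what produces the visual pattern a neural network can later be trained on.

```python
import cmath
import math


def spectrogram(samples, frame_size=64, hop=32):
    """Return one list of frequency-bin magnitudes per overlapping frame."""
    # A Hann window tapers each frame's edges to reduce spectral leakage.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_size - 1))
              for n in range(frame_size)]
    frames = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        chunk = [samples[start + n] * window[n] for n in range(frame_size)]
        magnitudes = []
        # Naive discrete Fourier transform over the non-negative frequencies.
        for k in range(frame_size // 2 + 1):
            total = sum(chunk[n] * cmath.exp(-2j * math.pi * k * n / frame_size)
                        for n in range(frame_size))
            magnitudes.append(abs(total))
        frames.append(magnitudes)
    return frames


def tone(freq_hz, num_samples, sample_rate):
    """Generate a pure sine tone (a stand-in for one note of a bird song)."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(num_samples)]


# Hypothetical input: a 1,000 Hz note followed by a 2,000 Hz note.
SAMPLE_RATE = 8000
FRAME_SIZE = 64
signal = tone(1000, 400, SAMPLE_RATE) + tone(2000, 400, SAMPLE_RATE)

spec = spectrogram(signal, frame_size=FRAME_SIZE, hop=32)

# The loudest frequency bin in each frame traces the "shape" of the song.
peak_bins = [max(range(len(frame)), key=frame.__getitem__) for frame in spec]
peak_hz = [b * SAMPLE_RATE / FRAME_SIZE for b in peak_bins]
print(peak_hz[0], peak_hz[-1])
```

In this toy example the loudest frequency steps from the first note to the second partway through the clip. A classifier like the one described above would be trained on many labeled images of this kind rather than on hand-written rules.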

That means the thousands of hours of recordings collected from Sproul State Forest can be analyzed quickly and easily. It’s an enormous improvement over a typical bird biodiversity survey, which might consist of anywhere from 15 minutes to a mere three minutes of observation, collected just once a year.

“You’re not going to have someone listen to 10,000 hours of recordings,” Kitzes says. “There’s no other way to do it than with some sort of automated tool that will tell you whatever it is you want to know.”

The software’s potential applications are wide-ranging and go far beyond bird calls. Collaborators are already using Kitzes’s model to study amphibians in Borneo and wolf populations in western Washington state.

Kitzes sees the project as part of a fundamental shift in the field of ecology, from relying on direct human observation to using tools like sensors and artificial intelligence to generate crucial data quickly and cheaply. That’s particularly valuable in a field like spatial macroecology, which tends to focus on large-scale patterns of movement and species diversity. Rather than looking at one or several species in a single location, a spatial macroecologist like Kitzes focuses on species diversity on a region- or continent-wide scale, with a particular emphasis on human influences.

The work can be vital to addressing environmental issues and extinction—subjects that have long held Kitzes’s attention. As a PhD student, he used audio recordings to study the feeding behavior of bats in relation to roadways. Before that, he worked for a nonprofit organization dedicated to sustainability. The experiences drove his desire to help scientists tackle the challenge of protecting endangered species.

It’s a perspective that lends his current project a sense of urgency.

“How do we get data where it’s needed, in time to do something?” Kitzes says. “The thing that you’re studying could be going extinct while you’re studying it, or at least changing dramatically. There’s a lot of potential for action if we can figure out how to do it intelligently.

“But we’d better hurry up.”

Breakthroughs in the Making

Healthy Companions

Pet ownership can offer more than the warm fuzzies, according to Mary Beth Rauktis, an assistant research professor in Pitt’s School of Social Work. Her team conducted surveys at 30 food banks in Pennsylvania’s Allegheny County and found that people with pets are willing to go to greater lengths to obtain food for both themselves and their furry friends, which helps them live healthier lifestyles than those without pets. The animals also provided emotional support and reduced isolation. Rauktis now plans to study how pet-focused activities in the home can improve pet owners’ health.

Smells Good

Relief from nicotine cravings could be a sniff away. A study led by Pitt psychology professor Michael Sayette found that smokers who smelled an aroma they found to be pleasant experienced significantly lower urges to smoke than those who inhaled neutral or tobacco odors. The researchers hypothesize that this could be caused by the brain linking smell and memory—a sweet scent associated with a happy memory may provide a useful distraction. The next step: testing whether smell can help smokers quit or reduce their intake.

On Instinct

Robots can be programmed to exhibit lifelike behavior, but just how closely can artificial systems mimic the core instincts and behaviors of living beings? A team led by the John A. Swanson Chair in Engineering, Anna C. Balazs, is working to find out. Researchers discovered that microscopic synthetic structures (in this case, catalyst-coated sheets resembling minuscule crabs) can be induced through chemical reaction to compete and collaborate for resources, much like animals. The work could eventually help microscopic objects like microrobots perform a range of complex tasks in fields from medicine to chemical engineering.

This article appeared in the Fall 2019 edition of Pitt Magazine.